Facial recognition use by South Wales Police ruled unlawful


Ed Bridges has had his image captured twice by AFR technology, which he said breached his human rights

The use of automatic facial recognition (AFR) technology by South Wales Police is unlawful, the Court of Appeal has ruled.

It follows a legal challenge brought by civil rights group Liberty and Ed Bridges, 37, from Cardiff.

But the court also found its use was a proportionate interference with human rights, as the benefits outweighed the impact on Mr Bridges.

South Wales Police said it would not be appealing the findings.

Mr Bridges had said being identified by AFR caused him distress.

The South Wales force has previously demonstrated the technology with a member of staff standing in

The court upheld three of the five points raised in the appeal.

It said there was no clear guidance on where AFR Locate could be used or who could be put on a watch list, that a data protection impact assessment was deficient, and that the force had not taken reasonable steps to find out whether the software had a racial or gender bias.

The appeal followed the dismissal of Mr Bridges' case at London's High Court in September 2019 by two senior judges, who had concluded use of the technology was not unlawful.

Responding to Tuesday's ruling, South Wales Police Chief Constable Matt Jukes said: "The test of our ground-breaking use of this technology by the courts has been a welcome and important step in its development. I am confident this is a judgment that we can work with."

'Delighted'

Mr Bridges said: "I'm delighted that the court has agreed that facial recognition clearly threatens our rights.

"This technology is an intrusive and discriminatory mass surveillance tool.

"For three years now, South Wales Police has been using it against hundreds of thousands of us, without our consent and often without our knowledge.

"We should all be able to use our public spaces without being subjected to oppressive surveillance."

Mr Bridges' face was scanned while he was Christmas shopping in Cardiff in 2017 and at a peaceful anti-arms protest outside the city's Motorpoint Arena in 2018.

He had argued it breached his human rights when his biometric data was analysed without his knowledge or consent.

Liberty lawyer Megan Goulding described the judgment as a "major victory in the fight against discriminatory and oppressive facial recognition".

She added: "It is time for the government to recognise the serious dangers of this intrusive technology. Facial recognition is a threat to our freedom - it has no place on our streets."

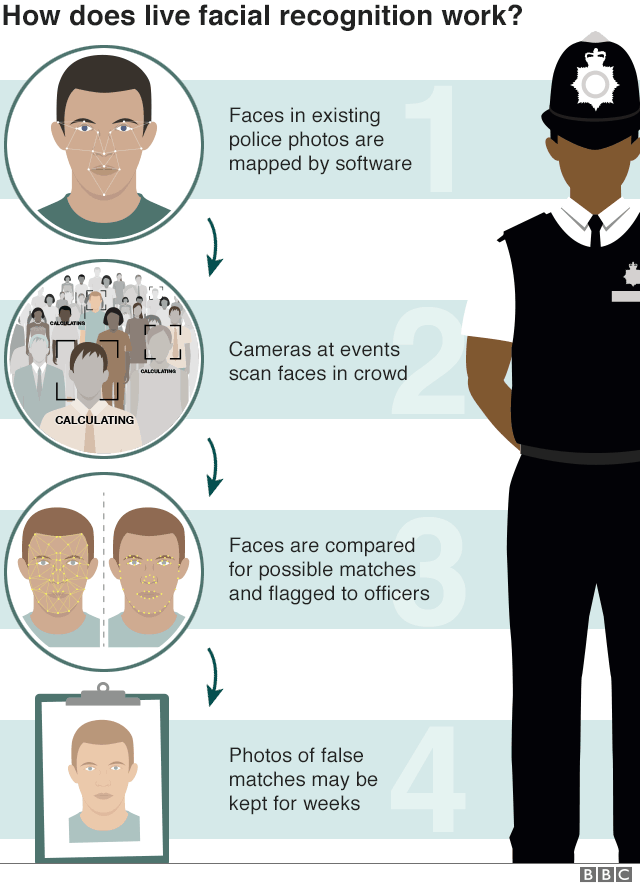

The technology maps faces in a crowd by measuring the distance between features, then compares results with a "watch list" of images - which can include suspects, missing people and persons of interest.
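As a rough illustration of that description only, the matching step can be sketched in a few lines of Python. This is not the software South Wales Police uses: the landmark names, the scaling by eye distance, the `threshold` value and both function names are assumptions made for the example.

```python
import math


def feature_vector(landmarks):
    """Turn named facial landmarks (x, y) into pairwise distances.

    Distances are divided by the distance between the eyes so that the
    same face photographed at a different size gives the same vector.
    The landmark names are hypothetical.
    """
    names = sorted(landmarks)
    scale = math.dist(landmarks["left_eye"], landmarks["right_eye"])
    return [
        math.dist(landmarks[a], landmarks[b]) / scale
        for i, a in enumerate(names)
        for b in names[i + 1:]
    ]


def match_against_watchlist(face, watchlist, threshold=0.05):
    """Return the watch-list names whose measurements are close to the face.

    The threshold is an illustrative value, not one taken from any
    real system.
    """
    probe = feature_vector(face)
    return [
        name
        for name, entry in watchlist.items()
        if math.dist(probe, feature_vector(entry)) <= threshold
    ]
```

A lower threshold would flag fewer passers-by but miss more genuine matches, and a higher one would do the reverse.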

South Wales Police has been trialling this form of AFR since 2017, predominantly at big sporting fixtures, concerts and other large events across the force area.

The force had confirmed Mr Bridges was not a person of interest and had never been on a watch list.

Responding to the ruling, the force said its use of the technology had resulted in 61 people being arrested, for offences including robbery, violence and theft, and on court warrants.

It said it remained "completely committed to its careful development and deployment" and was "proud of the fact there has never been an unlawful arrest as a result of using the technology in south Wales".

During the remote hearing last month, Liberty's barrister Dan Squires QC argued that if everyone was stopped and asked for their personal data on the way into a stadium, people would feel uncomfortable.

"If they were to do this with fingerprints, it would be unlawful, but by doing this with AFR there are no legal constraints," he said, noting that there are clear laws and guidance on taking fingerprints.

Mr Squires said it was the potential use of the power, not its actual use to date, that was the issue.

"It's not enough that it has been done in a proportionate manner so far," he said.

He argued there were insufficient safeguards within the current laws to protect people from an arbitrary use of the technology, or to ensure its use was proportionate.

The impact of the ruling will extend to other police forces. But what it has not done is create an insurmountable barrier to them using live facial recognition in the future.

In fact, the judges state that the benefits of the tech are "potentially great" and the intrusion into innocent people's privacy "minor".

But their determination expresses a need for more care. Police forces - including London's Met, which has trialled a similar system - need clearer guidance.

Specifically, the ruling indicates officers will have to clearly document who they are looking for and what evidence they have that those targets are likely to be in the monitored area.

They will also need to check that the software doesn't exhibit racial or sexual bias as to who it flags.
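One way such a check might be run, sketched here in Python under assumptions of our own, is to compare how often the software wrongly flags people from different groups in a labelled test set. The record layout and the function name are hypothetical, and the ruling does not prescribe any particular method.

```python
from collections import defaultdict


def false_match_rate_by_group(results):
    """Share of people NOT on the watch list who were flagged, per group.

    `results` is an iterable of (group, flagged, on_watchlist) tuples
    from a labelled test set; this layout is an assumption made for
    the example.
    """
    flagged_wrongly = defaultdict(int)
    not_on_list = defaultdict(int)
    for group, flagged, on_watchlist in results:
        if not on_watchlist:
            not_on_list[group] += 1
            if flagged:
                flagged_wrongly[group] += 1
    return {group: flagged_wrongly[group] / total for group, total in not_on_list.items()}
```

A large gap between groups in these rates would be one sign that the software treats some people less accurately than others.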

Tony Porter - England and Wales' Surveillance Camera Commissioner - has said he hopes the Home Office will take this opportunity to update a "woefully" out-of-date code of practice used to regulate facial recognition and other surveillance efforts.

That echoes a call by the House of Commons' Science and Technology committee last year, which called for all use of automatic facial recognition to be suspended until relevant regulations had been put in place.

Elsewhere, a committee of MSPs have made it clear they think it would be premature for the police in Scotland to use the tech in its current state, and Northern Ireland has long-standing plans to create its own Biometrics Commissioner, who might eventually examine the issue.

- Published 11 August 2020
