The self-driving trucks that are deliberately crashed

The truck self-driving system of Canadian firm Waabi has started work with Uber Freight

The developers of self-driving trucks don't usually like to see them crash in testing - there would be a lot of mangled metal and the risk of causing serious injury or worse.

Instead the hope is very much that they don't have an accident.

Yet one company at the forefront of autonomous lorries, Waabi, is not only happy to crash the vehicles over and over again in testing, it says this is essential, as you need to know what will happen in different accident situations.

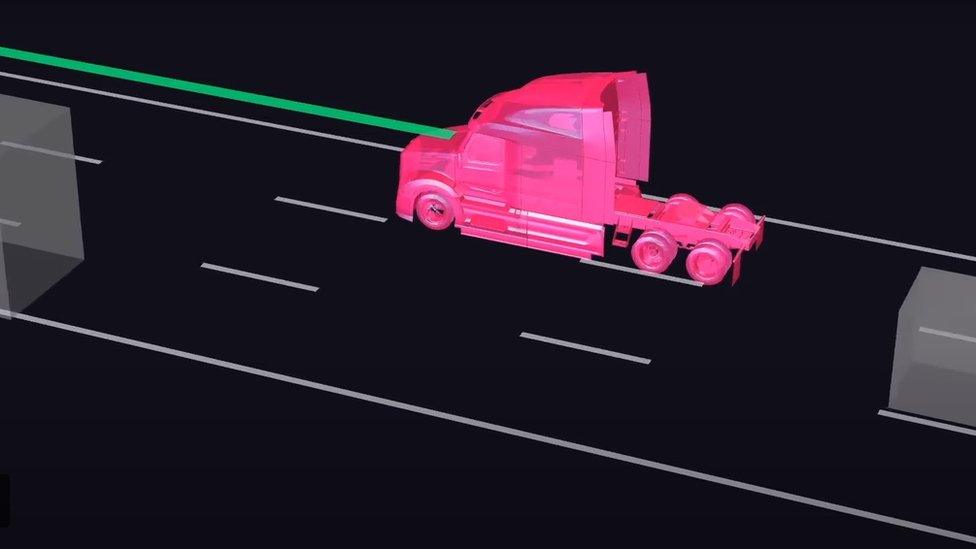

Waabi is able to do this because, instead of only testing real vehicles on real roads, it does the vast majority of such work on digital versions of the trucks in its AI-powered simulator. And it is the computerised lorries that it will prang.

The Canadian firm says its simulator - Waabi World - is so accurate and realistic that it replicates real world conditions. Endless scenarios can be quickly created by the AI software, such as computerised cars mindlessly crossing lanes in the road ahead, or the lorry having to brake sharply after a pedestrian walks into the road.

This creates all sorts of useful data which, for example, can show where to place sensors on the truck or how to handle situations that require split-second braking and manoeuvring.

The data is fed into Waabi's real world system called Waabi Driver, which has now started commercial operations in Texas with Uber Freight.

Self-driving lorries are allowed under Texas law, and they do not require a human being to be in the cab as a safety backup. However, Waabi says that a human will be behind the wheel of the Uber Freight vehicles, at least to begin with.

Waabi does most of its testing work in a digital world

"Some other companies like to brag that they've driven millions of miles in the real world testing their self-driving systems," says Raquel Urtasan, the founder of Waabi. "But in my opinion that's a minus, not a plus.

"They can't create accidents to see how their vehicles will react. It's one of the biggest bottlenecks in self-driving today."

Waabi was founded in 2021, and Ms Urtasan says its digital approach has enabled it to develop its self-driving system far more quickly than rivals. It has also helped it secure investment from Swedish truck manufacturer Volvo.

While other self-driving tech firms such as Google's Waymo, General Motors' Cruise, and Aurora also now use AI simulators, Waabi says its difference is that its development started - and mostly remained - in a simulator rather than with a real-world truck.

Some critics question whether it is possible for a simulator, no matter how advanced, to replicate real world situations - and even Waabi does real world testing on top.

The company is an example of a tech firm using AI to create something called "synthetic data". This is data that has been created artificially, but can then be used in a real world application.

Another company at the forefront of the growing use of synthetic data is Silicon Valley-based Synthesis AI. It specialises in making AI-powered facial recognition systems - the tech that allows a camera and computer to identify you by your face.

This can be everything from Apple's Face ID system to get into your mobile phone, to the cameras at airports that match your face to the photo on your passport.

Until very recently, to train such facial recognition systems you'd have to photograph as many people as you could get hold of.

"The way you would solve that in the real world would be to recruit people, get waivers, bring them into a lab, image them, and make sure you capture as much variability as possible with movement and lighting," says Yashar Behzadi, Synthesis AI's chief executive.

He adds that getting hold of volunteers was extremely difficult during Covid, just when they were most needed, as recognition systems had to be strengthened to cope with people wearing face masks.

To solve the problem of finding sufficient real people to photograph, Synthesis AI and other firms have instead turned to using artificial data to train their systems.

Synthesis AI has created a computerised, 3D world, where "hundreds of thousands" of different digital faces can be created and then used to test and strengthen the AI.

Synthesis AI uses synthetic data to train its facial recognition software

Mr Behzadi says that his firm's system has been trained to recognise "5,000 facial landmarks", instead of the 68 used in older systems that relied on real world data for their development.

He adds that using artificial data to train facial recognition systems also helps them to be better able to identify people with darker skin. There have long been claims that some existing systems are worse at identifying people of colour.

"Synthetic data is really primed for this... you can explicitly craft your representation across age, gender, skin tone, ethnicity, and more nuanced features, so that you know your system isn't going to be biased by design."

Synthesis AI's customers include Apple, Google, Amazon, Intel, Ford, and Toyota. Mr Behzadi adds that another benefit of using synthetic data is you don't need to capture or keep any real world consumer data. "So you are building privacy into product systems."

However, not everyone welcomes the development of synthetic data. "It isn't a miracle solution to data privacy and AI harms," says Grant Fergusson, a lawyer who specialises in the global impact of AI.

"I actually think it can make things worse. To use synthetic data responsibly, an AI developer needs to understand its limitations.

"Real world data can inject bias into AI models by reflecting historical biases and prejudices. Synthetic data can inject bias into AI models by naively attempting to reflect this biased, real-world data."

Yet Mr Fergusson, who works for the Washington DC-based research group Electronic Privacy Information Center, does add that "responsible use of synthetic data can still be a valuable tool for creating less biased and less privacy-invasive AI systems."